Exploring photogrammetry and NeRFs with OPF

Photogrammetry and Neural Radiance Fields (NeRF) are transforming the way we capture and render 3D scenes. In this article, we explore the Open Photogrammetry Format (OPF) and show how an OPF project can feed directly into a NeRF rendering tool. You'll see how combining these technologies streamlines photogrammetry workflows and opens new possibilities for collaboration and research.

The information in this article was provided by Grégoire Krähenbühl, Fayez Lahoud, Tomas Hynek, and Taras Pavliv.

Understanding OPF and NeRF

The Power of OPF: Unlocking Collaboration in Photogrammetry

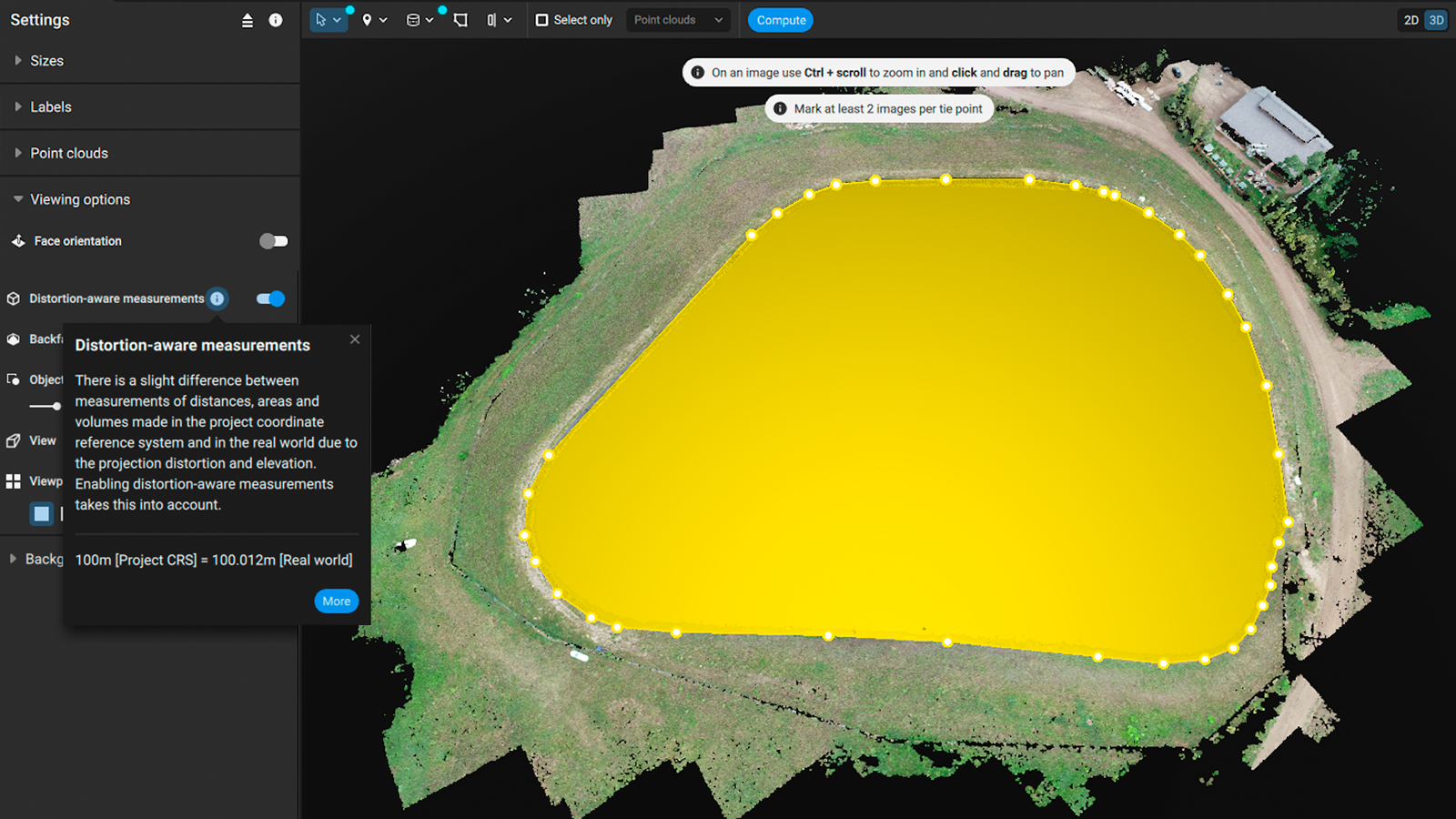

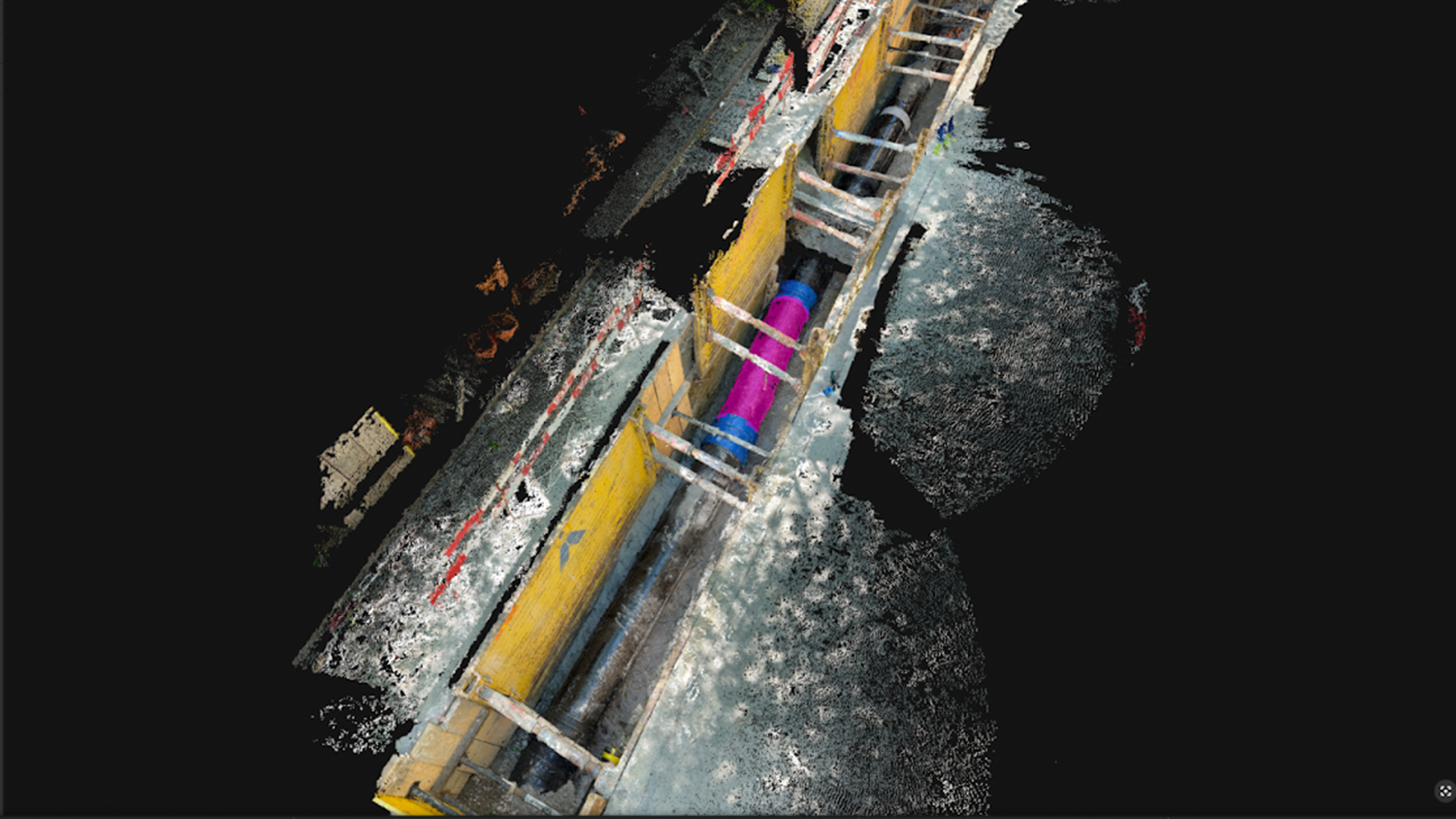

Photogrammetry projects often face challenges when it comes to data exchange and collaboration. OPF addresses these issues by providing a standardized format that ensures compatibility between different software and enables smooth collaboration among researchers and professionals. OPF’s goals include enhancing data interoperability, simplifying workflows, and promoting efficient collaboration in the photogrammetry community.

Neural radiance fields

Generating novel views of a scene is a major challenge in computer graphics, and a necessity when building immersive virtual reality, designing detailed worlds, and creating gameplay experiences. Synthesizing a novel view traditionally requires a significant amount of data, such as 3D models, textures, shading, reflections, and lighting. Because creating and maintaining these assets demands considerable resources, novel view synthesis is a heavily researched topic.

Neural Radiance Fields (NeRFs) are a state-of-the-art solution to this problem, promising to alleviate many of the challenges of current techniques. The term Neural Radiance Field describes the three elements at the center of this technique:

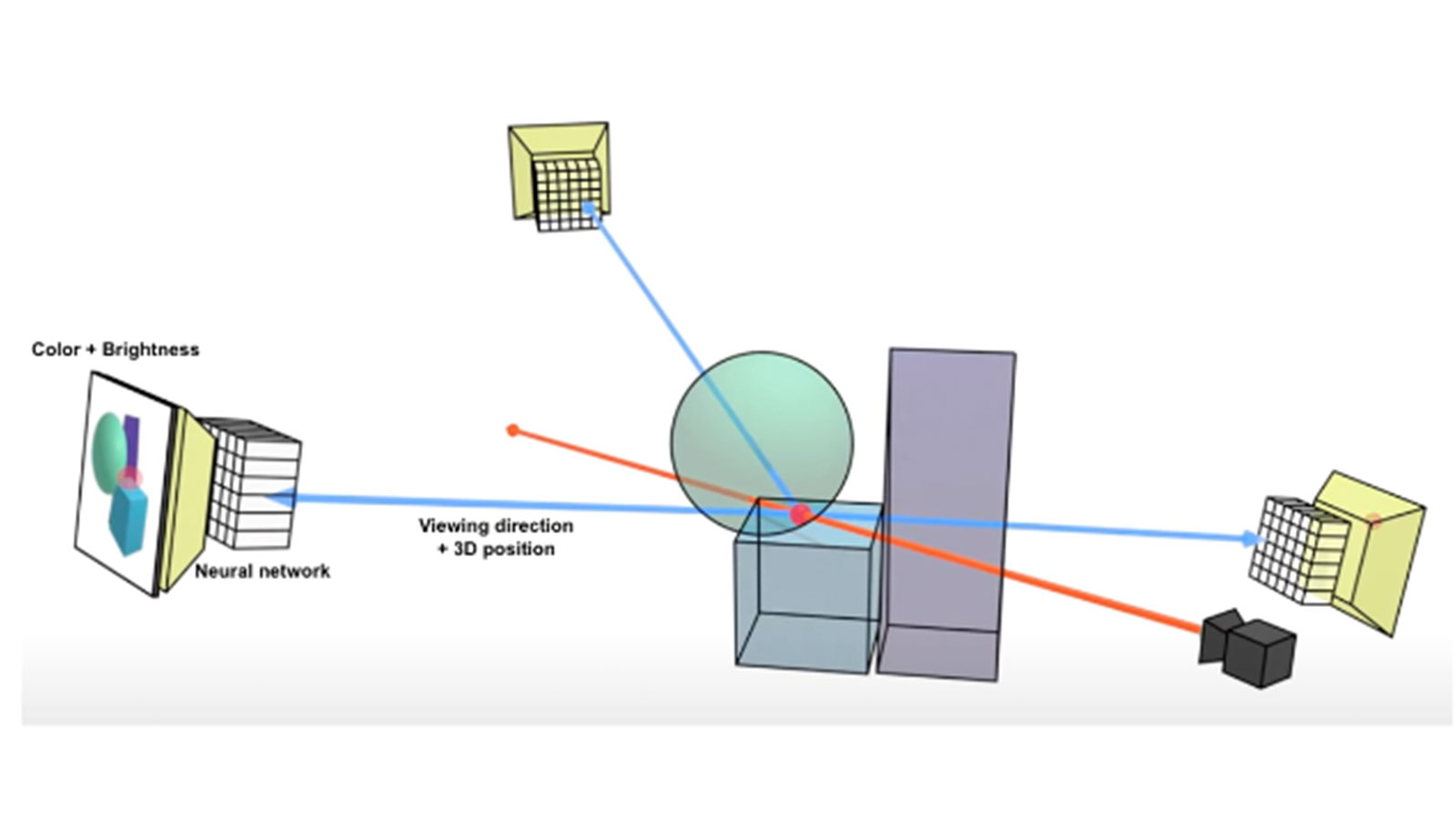

1. Field: a mathematical mapping that transforms a set of inputs into a set of outputs following a specific structure.
2. Radiance: the mapping transforms 3D positions and viewing directions into the color and brightness of the light along each ray, together with a volume density that describes how opaque each point in space is.
3. Neural: the mapping is implemented with a neural network architecture.
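To make these three terms concrete, here is a minimal sketch of the interface a radiance field exposes: a function from a 3D position and a viewing direction to a color and a density. In a real NeRF, a trained neural network provides this mapping; here it is replaced by a hypothetical hand-written field, `toy_radiance_field` (a sphere whose tint changes with the viewing direction), so that the example runs without any training.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
RGB = Tuple[float, float, float]


def toy_radiance_field(position: Vec3, direction: Vec3) -> Tuple[RGB, float]:
    """Hypothetical stand-in for a trained NeRF network (not a real model).

    Maps a 3D position and a unit viewing direction to (rgb, density).
    The "scene" is a unit sphere at the origin; empty space has zero density.
    """
    x, y, z = position
    inside = x * x + y * y + z * z <= 1.0
    density = 5.0 if inside else 0.0
    # Crude view-dependent effect: the sphere looks redder when seen along +x.
    red = 0.5 + 0.5 * max(0.0, direction[0])
    color = (red, 0.2, 0.2) if inside else (0.0, 0.0, 0.0)
    return color, density


if __name__ == "__main__":
    # Same point, two viewing directions: same density, different color.
    print(toy_radiance_field((0.0, 0.0, 0.0), (1.0, 0.0, 0.0)))
    print(toy_radiance_field((0.0, 0.0, 0.0), (-1.0, 0.0, 0.0)))
```

The key point is the signature: position and direction go in, color and density come out. Training a NeRF amounts to fitting a network with exactly this signature to the captured images.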

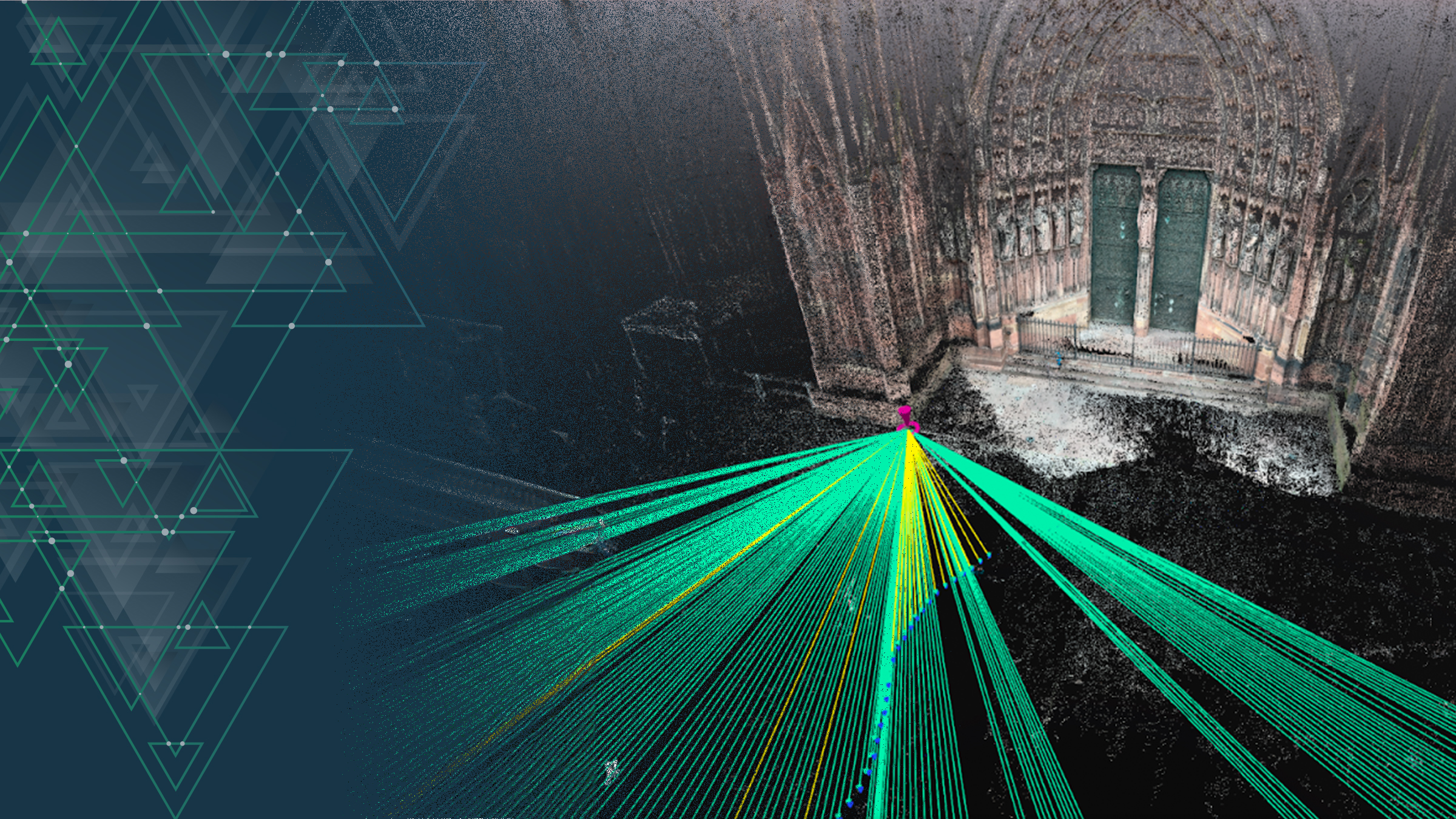

A neural radiance field answers the question: what do I see if I look at position X from direction Y? The image illustrates this: the neural network is trained on samples along camera rays (in red) so that it can predict the colors seen from new viewing directions.
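In the original NeRF formulation, a pixel color is obtained by volume rendering: the field is queried at sample points along the camera ray, and their colors are alpha-composited, each weighted by its own opacity and by the transmittance (the fraction of light not yet absorbed) in front of it. The sketch below implements this standard quadrature in plain Python; the sample values in the usage example are made up for illustration.

```python
import math
from typing import Sequence, Tuple

RGB = Tuple[float, float, float]


def composite_ray(colors: Sequence[RGB], densities: Sequence[float],
                  deltas: Sequence[float]) -> RGB:
    """Alpha-composite field samples along one ray (NeRF-style volume rendering).

    colors[i], densities[i]: field predictions at sample i, ordered near to far.
    deltas[i]: distance from sample i to the next sample along the ray.
    """
    transmittance = 1.0  # light surviving up to the current sample
    pixel = [0.0, 0.0, 0.0]
    for color, sigma, delta in zip(colors, densities, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        weight = transmittance * alpha
        for k in range(3):
            pixel[k] += weight * color[k]
        transmittance *= 1.0 - alpha  # i.e. multiply by exp(-sigma * delta)
    return (pixel[0], pixel[1], pixel[2])


if __name__ == "__main__":
    # Two half-transparent samples: red in front, green behind (illustrative values).
    half = math.log(2.0)  # sigma * delta = ln 2 gives alpha = 0.5
    print(composite_ray([(1.0, 0.0, 0.0), (0.0, 1.0, 0.0)], [half, half], [1.0, 1.0]))
```

Because the compositing is differentiable, comparing such rendered pixels with the captured photos is what lets the network be trained from images alone.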

By operating directly on radiance fields, which jointly describe shape, texture, and material effects, NeRFs learn and encode all of a scene's assets in a single model. This reduces the effort of creating and maintaining separate assets while still producing highly detailed 3D reconstructions and realistic renderings, enabling more immersive virtual tours, 3D object manipulation, detailed digital twin analysis, and much more.

Practical Workflow Example

Acquiring the dataset: PIX4Dcatch and calibration with PIX4Dmatic

Like photogrammetry, NeRFs require image datasets captured with a high level of overlap between images, which must then be accurately calibrated to obtain high-quality renders. One of the best ways to capture high-quality terrestrial imagery with a mobile device is PIX4Dcatch, a free-to-use, user-friendly mobile application for capturing images in the field. It is available for iOS and Android devices.
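A quick way to reason about "a high level of overlap" is pinhole-camera geometry: a photo taken at distance d with horizontal field of view θ covers a strip 2·d·tan(θ/2) wide on a surface facing the camera, so two shots taken s apart overlap by 1 − s / (2·d·tan(θ/2)). The sketch below assumes a flat, fronto-parallel surface and a camera moving sideways, which is only an approximation when walking around an object; the field of view and overlap target in the example are illustrative assumptions, not PIX4Dcatch settings.

```python
import math


def footprint_width(distance_m: float, hfov_deg: float) -> float:
    """Width of the strip seen by a pinhole camera on a flat, fronto-parallel surface."""
    return 2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0)


def side_overlap(distance_m: float, hfov_deg: float, step_m: float) -> float:
    """Overlap fraction between two images taken step_m apart (0 = none, 1 = identical)."""
    return max(0.0, 1.0 - step_m / footprint_width(distance_m, hfov_deg))


def max_step_for_overlap(distance_m: float, hfov_deg: float, overlap: float) -> float:
    """Largest sideways step between shots that still reaches the target overlap."""
    return (1.0 - overlap) * footprint_width(distance_m, hfov_deg)


if __name__ == "__main__":
    # Illustrative: 3 m from the object, ~65 degree horizontal FOV, 80% overlap target.
    step = max_step_for_overlap(3.0, 65.0, 0.8)
    print(f"move at most {step:.2f} m between shots")
```

Moving closer to the object shrinks the footprint, so the step between shots must shrink with it to keep the same overlap.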

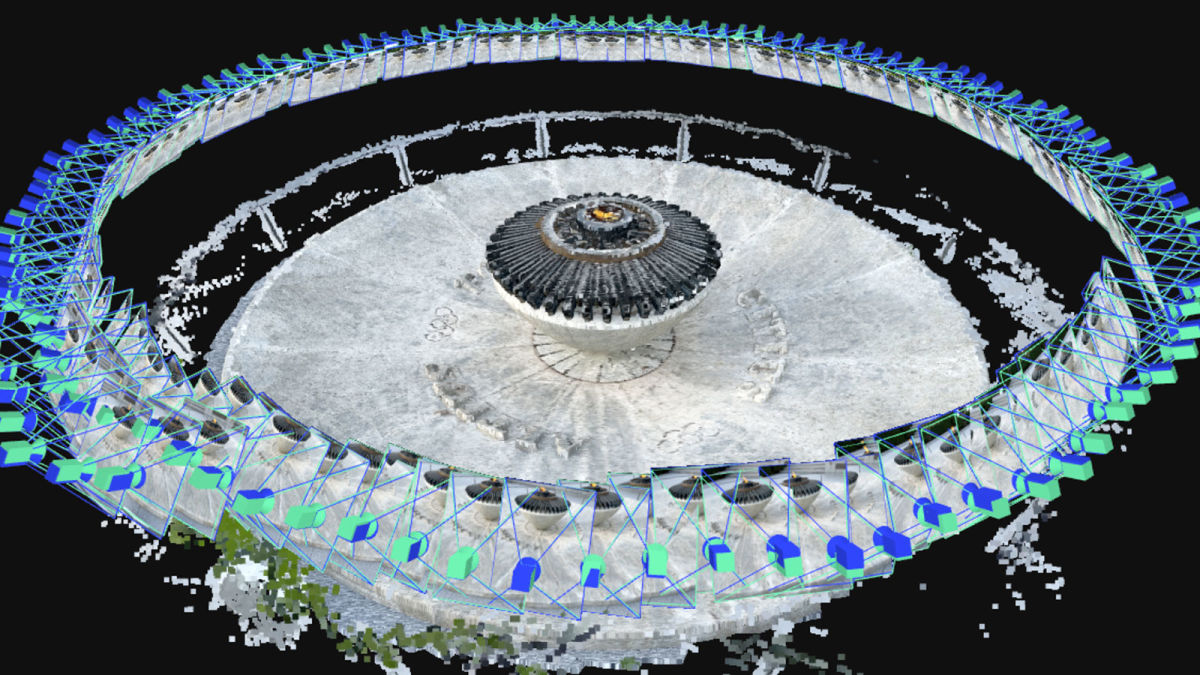

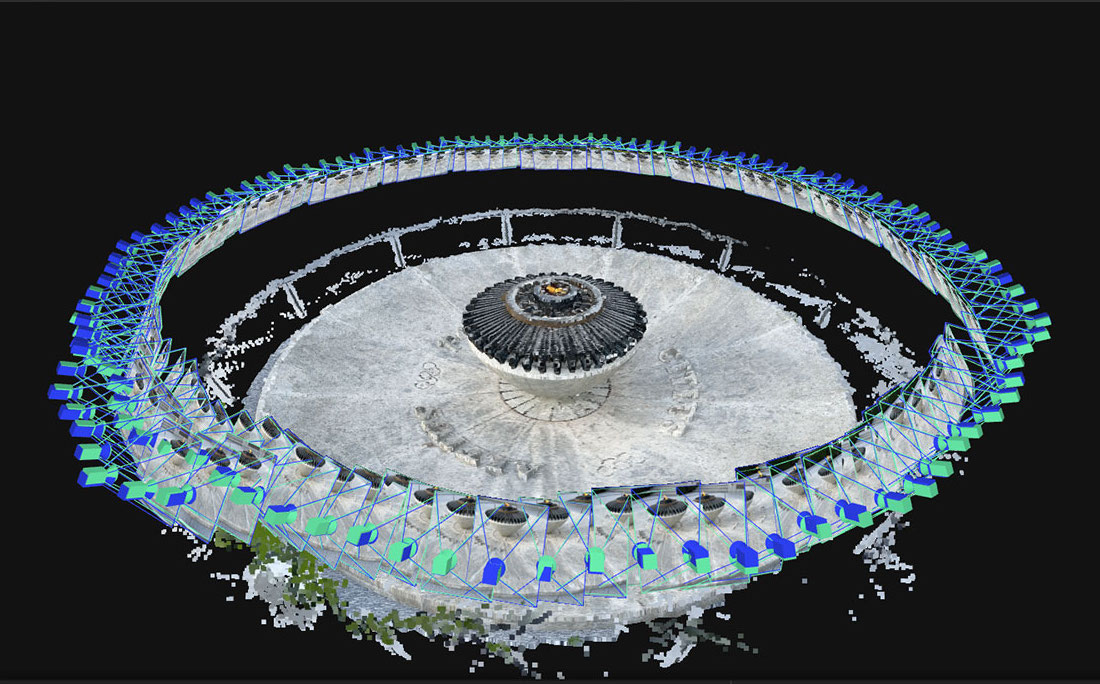

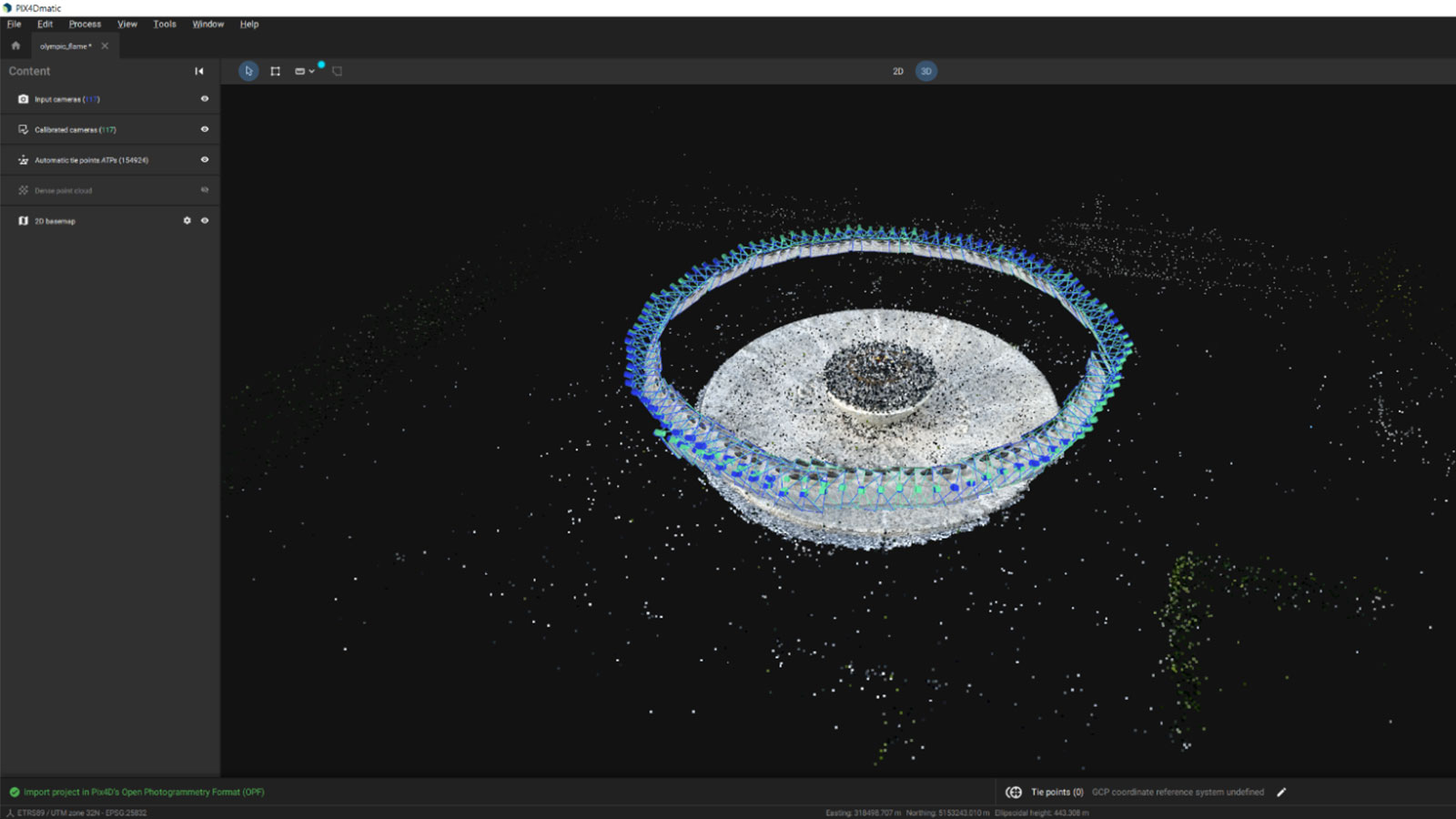

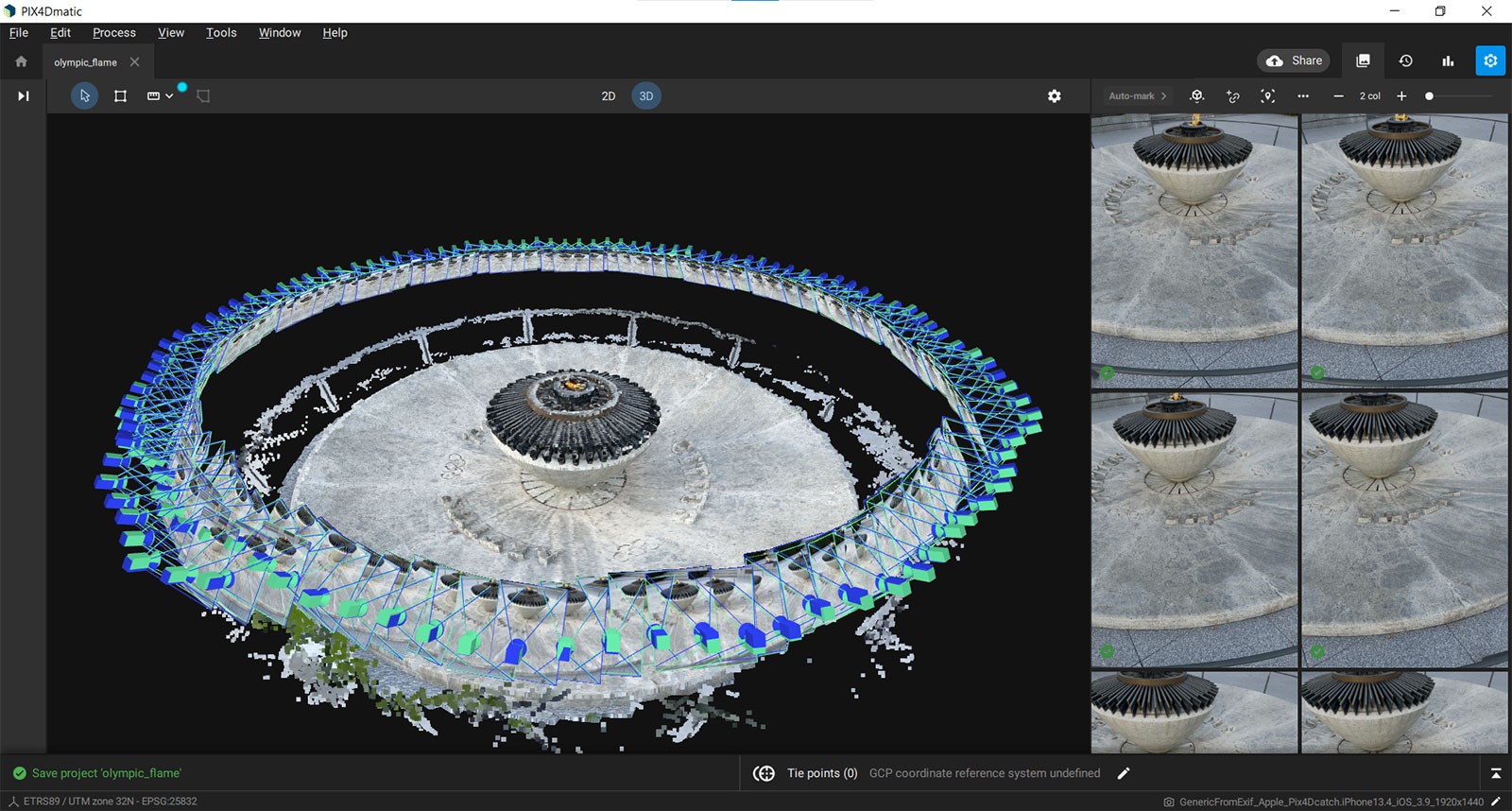

For this example, we went to the Olympic Park in Lausanne, Switzerland, to digitize the beautiful Olympic Flame proudly standing on its bronze and marble Cauldron. We used an iPhone to collect the images.

Along with the images, NeRF also requires an accurate and reliable calibration, an area where PIX4Dmatic excels. Back at the office, we downloaded the images, launched PIX4Dmatic, imported them, and started the automatic processing.

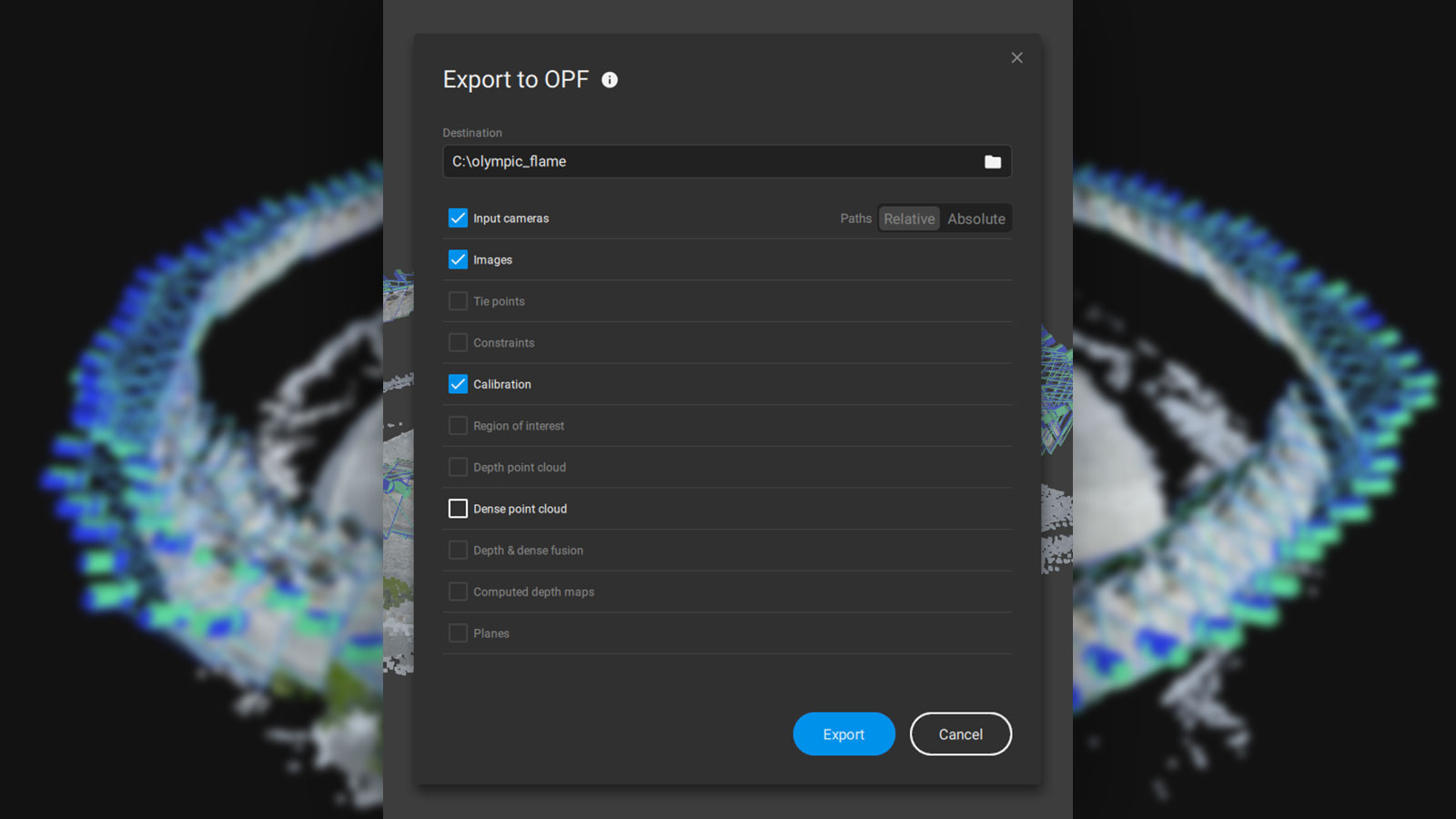

Once ready to convert the project to OPF, we used the PIX4Dmatic option “File/Export to OPF…”, selected an output folder, and we were done!
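Because an OPF project file is JSON that points to other resources (camera data, calibration results, point clouds, and so on), you can peek into an export with nothing but the standard library. The sketch below groups the referenced files by item kind. The field names it relies on (`items`, `kind`, `resources`, `uri`) reflect our reading of the OPF project specification and should be checked against the spec version of your export; `list_opf_resources` and `load_opf_project` are hypothetical helpers, and the pyopf library provides its own I/O for real projects.

```python
import json
from pathlib import Path
from typing import Dict, List


def load_opf_project(path: str) -> dict:
    """Read a project.opf file, which is a JSON document."""
    return json.loads(Path(path).read_text(encoding="utf-8"))


def list_opf_resources(project: dict) -> Dict[str, List[str]]:
    """Map each item kind to the resource URIs it references.

    Assumes the layout {"items": [{"kind": ..., "resources": [{"uri": ...}]}]};
    verify these field names against the OPF specification you target.
    """
    by_kind: Dict[str, List[str]] = {}
    for item in project.get("items", []):
        kind = item.get("kind", "unknown")
        for resource in item.get("resources", []):
            by_kind.setdefault(kind, []).append(resource.get("uri", ""))
    return by_kind


if __name__ == "__main__":
    # Hypothetical, hand-written project for illustration only (not a real export).
    example = {
        "items": [
            {"kind": "calibration", "resources": [{"uri": "calibration/cameras.json"}]},
            {"kind": "point_cloud", "resources": [{"uri": "pcl/pcl.gltf"}]},
        ]
    }
    for kind, uris in list_opf_resources(example).items():
        print(kind, uris)
```

For a real export, replace `example` with `load_opf_project("path/to/OPF/project.opf")`.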

Leverage the power of OPF: using the pyopf library

To make things easy, Pix4D provides pyopf, a set of open-source Python tools. It includes a script that converts an OPF-calibrated dataset into the input required by NVIDIA Instant NeRF.

Once you have Python (version 3.10 required) installed on your machine, pyopf can be installed using the standard “pip” installer. Use this command to install pyopf and its dependencies:

> pip install pyopf

From here, the OPF project can be converted into the input expected by NVIDIA Instant NeRF, a popular NeRF rendering tool, with the following command:

> opf2nerf path/to/OPF/project.opf --output-extension
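The converted output is a transforms JSON file that Instant NeRF reads (the training command in this article uses transforms_train.json). Assuming the common NeRF convention, in which each entry of `frames` has a `file_path` and a 4×4 camera-to-world `transform_matrix`, a quick sanity check is to extract the camera centers and confirm they surround the object at a plausible scale. The helpers below are a hypothetical sketch, not part of opf2nerf.

```python
import json
import math
from pathlib import Path
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def load_transforms(path: str) -> dict:
    """Read a NeRF-style transforms JSON file."""
    return json.loads(Path(path).read_text(encoding="utf-8"))


def camera_centers(transforms: dict) -> List[Vec3]:
    """Camera position of every frame.

    Assumes "transform_matrix" is a 4x4 camera-to-world matrix (row-major
    nested lists), so the camera center is the first three entries of its
    last column.
    """
    return [(m[0][3], m[1][3], m[2][3])
            for m in (frame["transform_matrix"] for frame in transforms["frames"])]


def mean_distance_to_centroid(points: List[Vec3]) -> float:
    """Average distance of the cameras from their centroid (a rough scale check)."""
    n = len(points)
    centroid = tuple(sum(p[k] for p in points) / n for k in range(3))
    return sum(math.dist(p, centroid) for p in points) / n


if __name__ == "__main__":
    centers = camera_centers(load_transforms("path/to/generated/transforms_train.json"))
    print(f"{len(centers)} cameras, mean radius {mean_distance_to_centroid(centers):.3f}")
```

For a capture that circles an object, as around the cauldron here, the centers should form a rough ring around the scene rather than a cluster or a line.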

opf2nerf has a few parameters to tweak the conversion; run “opf2nerf --help” to list them. For those who want more detail, the full source code of the tool is also available for inspection. It is a useful guide to reading an OPF project and extracting valuable information such as camera parameters.
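One camera parameter that often needs translating between formats is the focal length. Photogrammetry tools typically express it in pixels, while the original NeRF transforms files describe the horizontal field of view as `camera_angle_x`, in radians. For an ideal pinhole camera of image width w and focal length f_x (in pixels), camera_angle_x = 2·atan(w / (2·f_x)). The sketch below shows the conversion in both directions; it is not taken from the opf2nerf source, and instant-ngp can also read focal lengths in pixels directly through fields such as `fl_x`.

```python
import math


def focal_px_to_camera_angle_x(focal_px: float, width_px: int) -> float:
    """Horizontal field of view (radians) of an ideal pinhole camera."""
    return 2.0 * math.atan(width_px / (2.0 * focal_px))


def camera_angle_x_to_focal_px(camera_angle_x: float, width_px: int) -> float:
    """Inverse conversion: focal length in pixels from horizontal FOV in radians."""
    return width_px / (2.0 * math.tan(camera_angle_x / 2.0))


if __name__ == "__main__":
    # Illustrative values, not taken from the Olympic Flame dataset.
    width, focal = 4032, 3000.0
    angle = focal_px_to_camera_angle_x(focal, width)
    print(f"camera_angle_x = {angle:.4f} rad ({math.degrees(angle):.1f} deg)")
```

This conversion ignores lens distortion and an off-center principal point, which a full converter also has to carry over.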

Rendering with NeRF: Instant NeRFs by NVIDIA

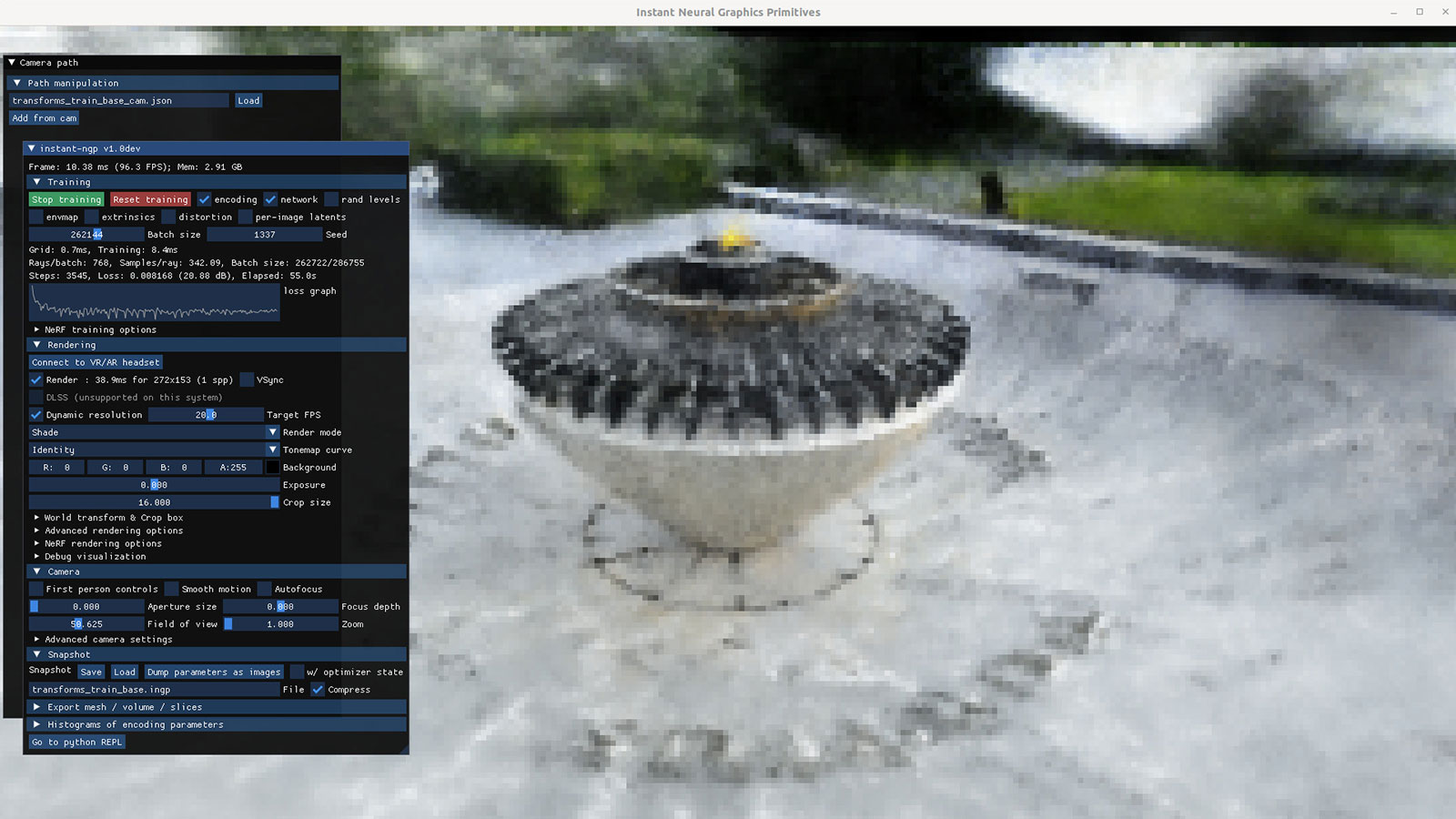

With the OPF project converted to the input needed by NVIDIA Instant NeRF, we were nearly ready for some visualization, but first we needed to set up NVIDIA Instant NeRF. Caution: a powerful NVIDIA GPU is required. In this example, we used a recent NVIDIA GPU with 12 GB of VRAM, which may be insufficient for larger datasets. Once everything was set up, we started the Instant NeRF training with:

> instant-ngp path/to/generated/transforms_train.json

and a few minutes later we were able to generate our impressive final rendering video, pictured below:

OPF is an important step toward making photogrammetry results accessible to everyone. In this article, we showed how a dataset captured with PIX4Dcatch and calibrated with PIX4Dmatic can be rendered with a popular NeRF implementation. We believe OPF has a lot of value for collaboration and data exchange, and we encourage researchers and professionals to use these technologies to push the boundaries of photogrammetry research. Together, PIX4Dcatch, PIX4Dmatic, and OPF open up exciting opportunities for innovation and future advancements in the field.

Got questions, or want to get involved? Join the conversation on LinkedIn and follow our hashtag #Pix4DLabs. The OPF project used in this article is also available to access.